First in the line of my Home Server/Storage/Lab Setup posts will be my ESXi server. I will list the hardware involved, power usage, etc.

This server will be my third generation of a home VMware ESX(i) server. The first was an AMD Phenom II X4 940 with 8GB of memory in 2009. After that came an Intel Core i5-2500 with 32GB of memory (which has now been given a second life as the ZFSguru machine), and the new machine is Haswell-based.

The Hardware:

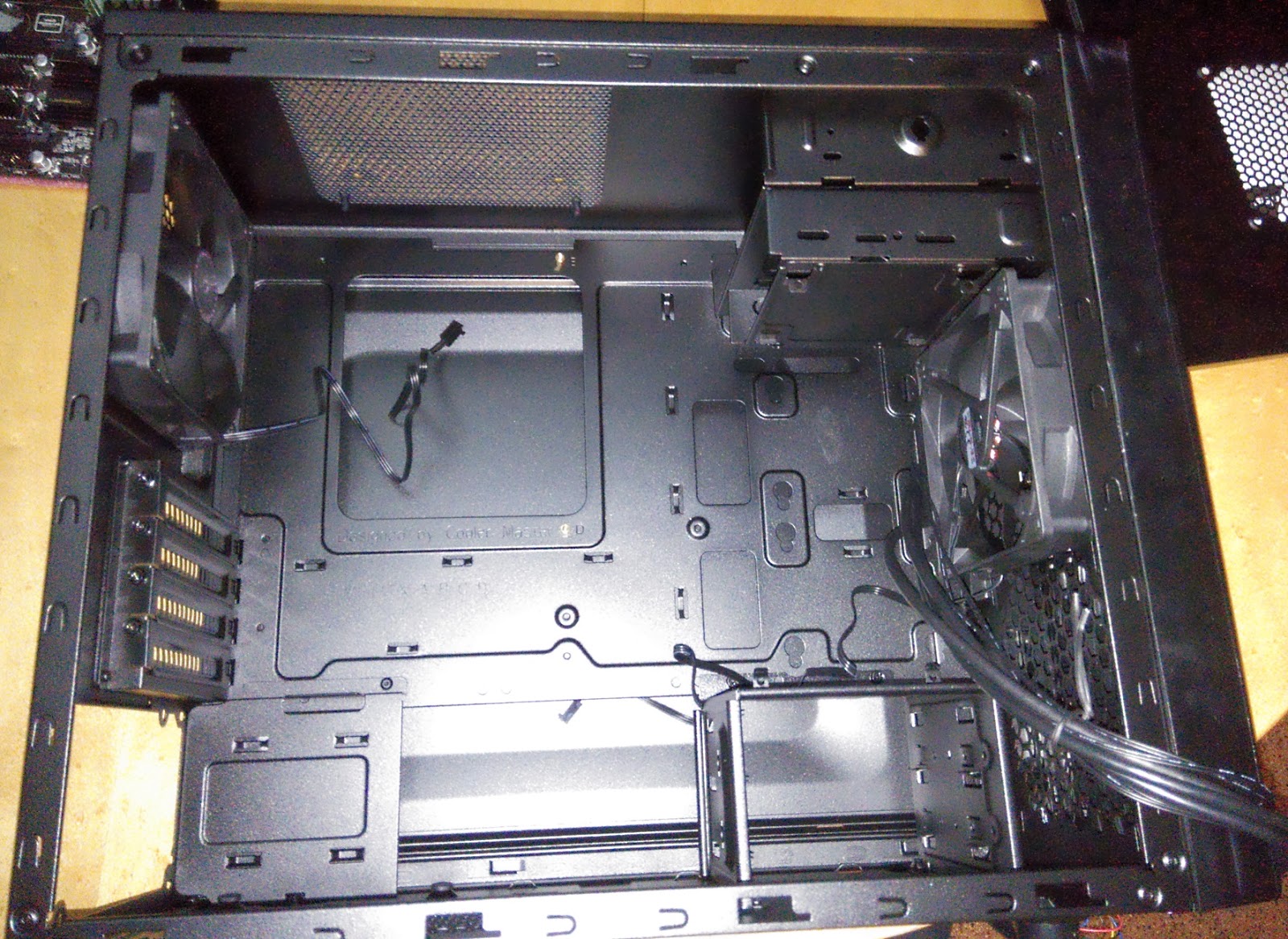

Case: Cooler Master N200 – 34,90E

A cheap case with an overall good design and low weight. Nice and small, but in my case it won’t need to hold any disks anyway. I found the case to be surprisingly well built for the price and am quite happy with it. The two cooling fans aren’t ‘noctua’ quiet but they do seem to provide ample airflow.

PSU: Seasonic SS-850KM (Would have used a Seasonic G-360 – 60,00E)

A completely ridiculous 850 watt PSU, but I had it as a spare and since it’s still a very high-quality gold level PSU I’m hoping the efficiency hit won’t be too bad. If in the future I need the high-wattage PSU for anything else, I will probably replace it with an excellent Seasonic G-360, which costs about 60E.

Motherboard: ASRock H87M Pro4

While I am normally an Asus fan, lately I have been trying ASRock motherboards with great results. I had also read that several other people had good experiences with this board in their VMware ESXi servers, which matters because ESXi is inherently very picky about hardware. The board features the H87 chipset, giving us more than enough PCIe lanes for the expansion cards while having 6x SATA600 onboard. Another great feature of this board is the onboard Intel I217V NIC. It’s not natively supported by ESXi, but there is a community driver which will enable it. This has been working without problems for me.

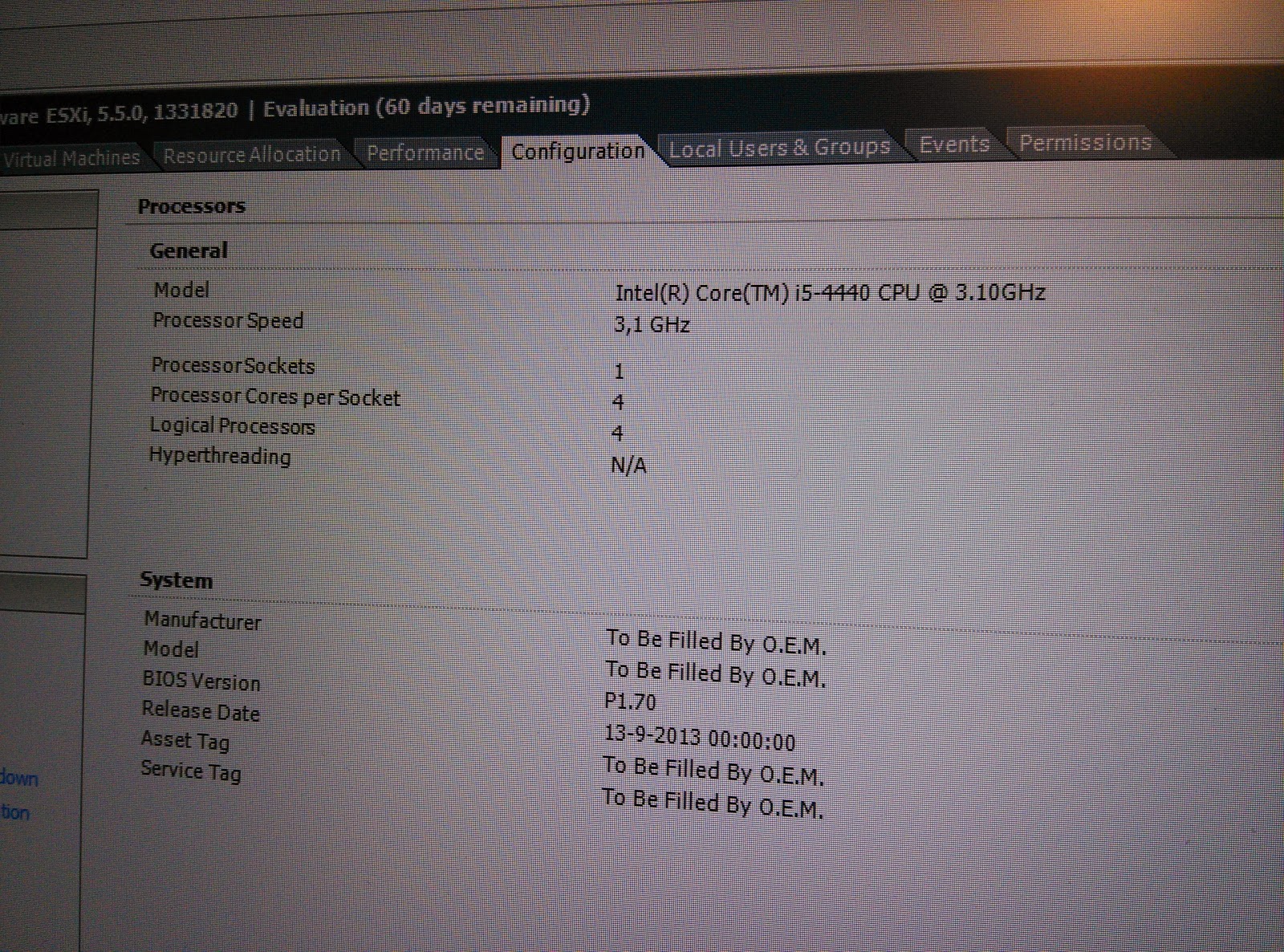

CPU: Intel Core i5 4440

A simple quad-core Intel Core i5 CPU running at a base frequency of 3.1GHz. This should give us plenty of power to run many VMs without a CPU bottleneck. The CPU also supports all of the Intel virtualization instructions, including VT-x (with EPT) and VT-d for PCIe passthrough support. Price/performance wise this was the best CPU to buy. An i7 adds nothing more than Hyper-Threading and 2MB of extra L3 cache, which together CAN result in a performance improvement of about 12% at most, but adds at least 33% to the price.
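To put that trade-off in numbers, here is a minimal sketch that uses only the two figures quoted above (a best-case 12% speed-up for at least 33% more money). The function name is made up for illustration, and real-world gains depend entirely on the workload.

```python
def relative_value(perf_gain: float, price_increase: float) -> float:
    """Performance per euro of the pricier CPU relative to the cheaper one.

    A result of 1.0 means both CPUs give the same performance per euro.
    """
    return (1.0 + perf_gain) / (1.0 + price_increase)


# Figures from the text: at most 12% faster, at least 33% more expensive.
i7_vs_i5 = relative_value(perf_gain=0.12, price_increase=0.33)
print(f"i7 performance per euro relative to the i5: {i7_vs_i5:.2f}")
```

Anything below 1.0 means the more expensive CPU gives you less performance for every euro spent, and that is with the most optimistic speed-up and the smallest price gap.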

I will be using the stock Intel cooler.

Memory: 32GB Quad-Channel CL9 1333 Kit

The memory I bought for the older version of QuinESX is still good, and since memory has gotten very expensive I did not buy a new 32GB kit but decided to reuse it. The only disadvantage is that the memory does not run at 1600MHz, but this shouldn’t influence performance too much. So 4 x 8GB modules.

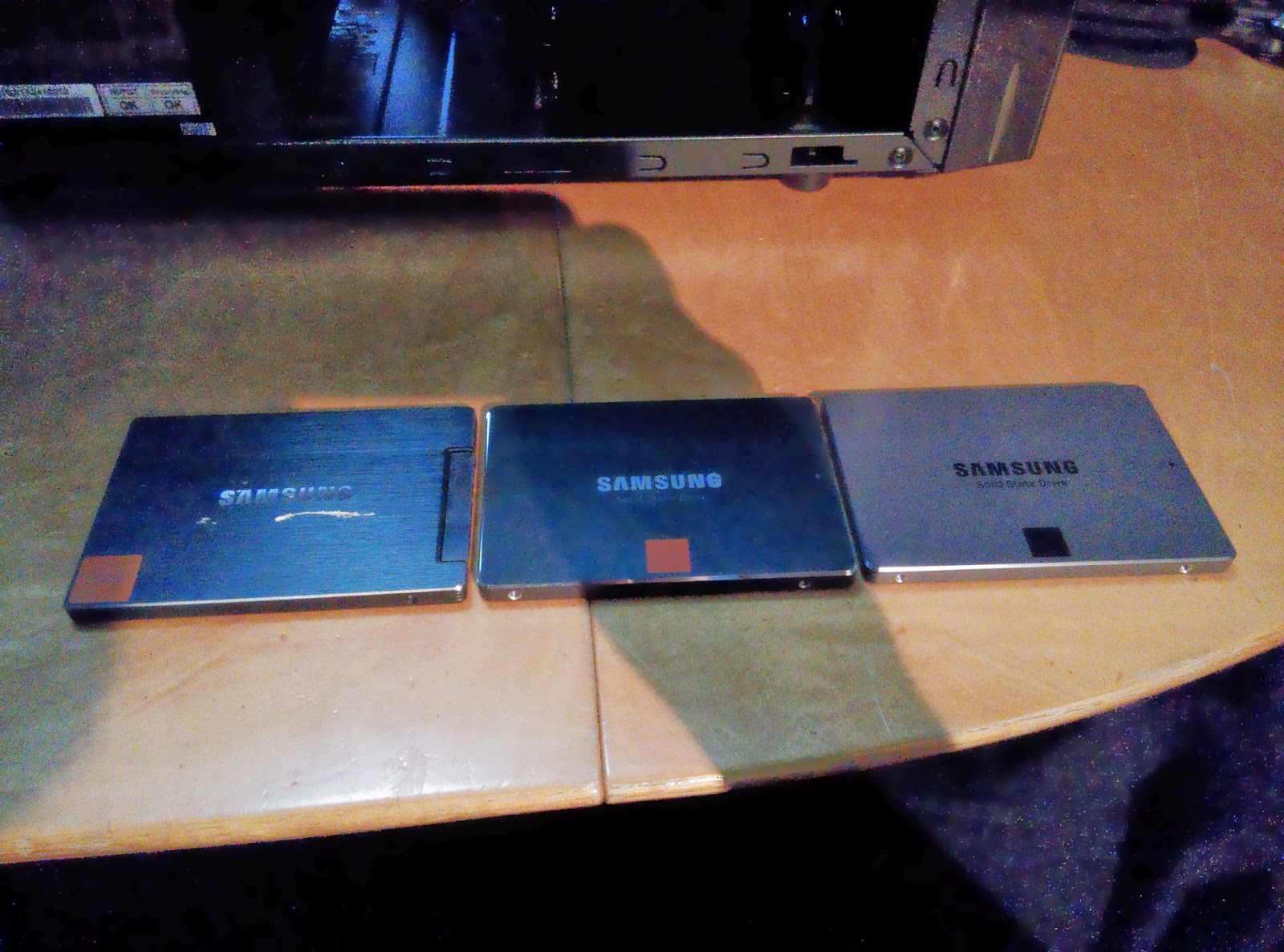

Storage: 3x SSD

– Samsung 830 256GB ~300E (from an older build)

– Samsung 840 500GB ~300E (from the previous QuinESXv7)

– Samsung 840EVO 500GB ~300E (new)

I decided to go SSD-only in the ESXi server. A big investment maybe, but these SSDs, while not being the pinnacle of speed, offer a good 1.2TB of storage space. That is more than enough for any VM to run off; any large data sets would be served from my storage server anyway. With the 256GB SSD I wish to experiment with running vFRC, but I will most likely end up using it as a datastore as well.
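As a quick sanity check on the capacity and the size of the investment, the sketch below adds up the three drives using the capacities and approximate prices from the list above. Keep in mind the drives were bought at different times, so the per-GB figures are not a like-for-like comparison.

```python
# (model, capacity in GB, approximate price in euros) as listed above
ssds = [
    ("Samsung 830", 256, 300),
    ("Samsung 840", 500, 300),
    ("Samsung 840 EVO", 500, 300),
]

total_gb = sum(capacity for _, capacity, _ in ssds)
total_eur = sum(price for _, _, price in ssds)
print(f"Total: {total_gb} GB for about {total_eur} euros")

# Rough cost per GB for each drive
for model, capacity, price in ssds:
    print(f"{model}: {price / capacity:.2f} euros per GB")
```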

The 830 should last multiple petabytes of writes and both 840s should last ~1.2PB of writes, so I am not afraid of killing them anytime soon. They also come with a 3 year warranty, so I believe it’s a good investment to buy the bigger sizes: they will cost you less over time, give you more space and performance, and will last longer (because of the extra cells).
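To get a feel for what ~1.2PB of write endurance means in practice, here is a rough back-of-the-envelope sketch. The 1.2PB figure is the estimate from the text; the 50GB of writes per day is purely an assumed workload for illustration, so plug in your own number.

```python
GB_PER_PB = 1_000_000  # decimal units, the way drive vendors count


def years_until_worn_out(endurance_pb: float, writes_gb_per_day: float) -> float:
    """Years until the endurance estimate is used up at a constant daily write rate."""
    total_gb = endurance_pb * GB_PER_PB
    return total_gb / writes_gb_per_day / 365


# 1.2 PB comes from the text; 50 GB/day is an assumption, not a measurement.
years = years_until_worn_out(endurance_pb=1.2, writes_gb_per_day=50)
print(f"Roughly {years:.0f} years at 50 GB of writes per day")
```

Even if the real write load were ten times the assumed one, the drive would still outlive the warranty period by a comfortable margin.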

A single SSD, even one of these cheaper ones, already has the IOPS of 50 disks combined. Three should provide me with a mountain of IOPS and never have me waiting on disk! 😉

The 840EVO also features a very interesting design with a three-tier storage system. It has 512MB of RAM as the first tier, then 6GB of SLC memory (VERY fast writes, lasts very long) as the second tier, and the rest of the memory is TLC. If you stay within the 6GB of the SLC buffer, write performance should always be great and you won’t suffer any adverse effects of the SSD having TLC memory instead of MLC or SLC. After using it for a while: highly recommended!

Network:

The server has the previously mentioned onboard Intel I217V NIC. This NIC is not natively supported by ESXi and would need a customized ESXi ISO. Nowadays this can be easily achieved using a tool called ESXi-Customizer. To activate the NIC you will need the following VIB file (net-e1000e-2.3.2.x86_64.vib), which I have hosted on my FTP server. A more detailed guide on how to install it will follow below.

(I did not create this network driver. There are several guides on the internet on how to compile it and several people offering it for download. Thank those people for their work!)

Next to the onboard NIC I am currently using a dual-port Intel Pro/1000PT. These cards are on the HCL of VMware ESXi and thus work out of the box. No custom ISO needed.

Since the Pro/1000PT is already a few years old, I wanted to try something new and ordered an Intel I350-T4 quad-port NIC from eBay for about 125E. This is the newest, biggest, baddest 1Gbit NIC Intel has, with full virtualization offload support, etc. I’m hoping it will improve transfer rates and lower CPU utilization at the same time! 😀

Well now, how does this all look when put together? See the stream of pictures below, my comments will be added in between.

The bare chassis, nice and clean layout!

The two fans have 3 pin connectors, would have liked 4 pin PWM, but at least they are not PSU only! Cooling power is good, noise is good (for the price)

This awesome little tool is included with the ASRock board, a brilliant way to help you screw in your standoffs

Insert CPU

Insert Memory (Different Memory was used, this memory ended up in the ZFSguru machine)

Since the age of SSD’s, placing storage isn’t a big deal anymore. No more heat, weight or other annoyances to deal with… Let’s see!

3 SSD’s in order of Generation

Back side of the SSD’s

What’s this? Screw holes on the bottom of the case which just might be enough to hold my SSD’s. Note that the HardDrive retainer is fixed with normal screws and not riveted in. Very nice!

Yes, it works brilliantly. Only able to use one screw (the rest don’t line up) but that is no problem with SSD’s!

Snug in place, out of the way, excellent!

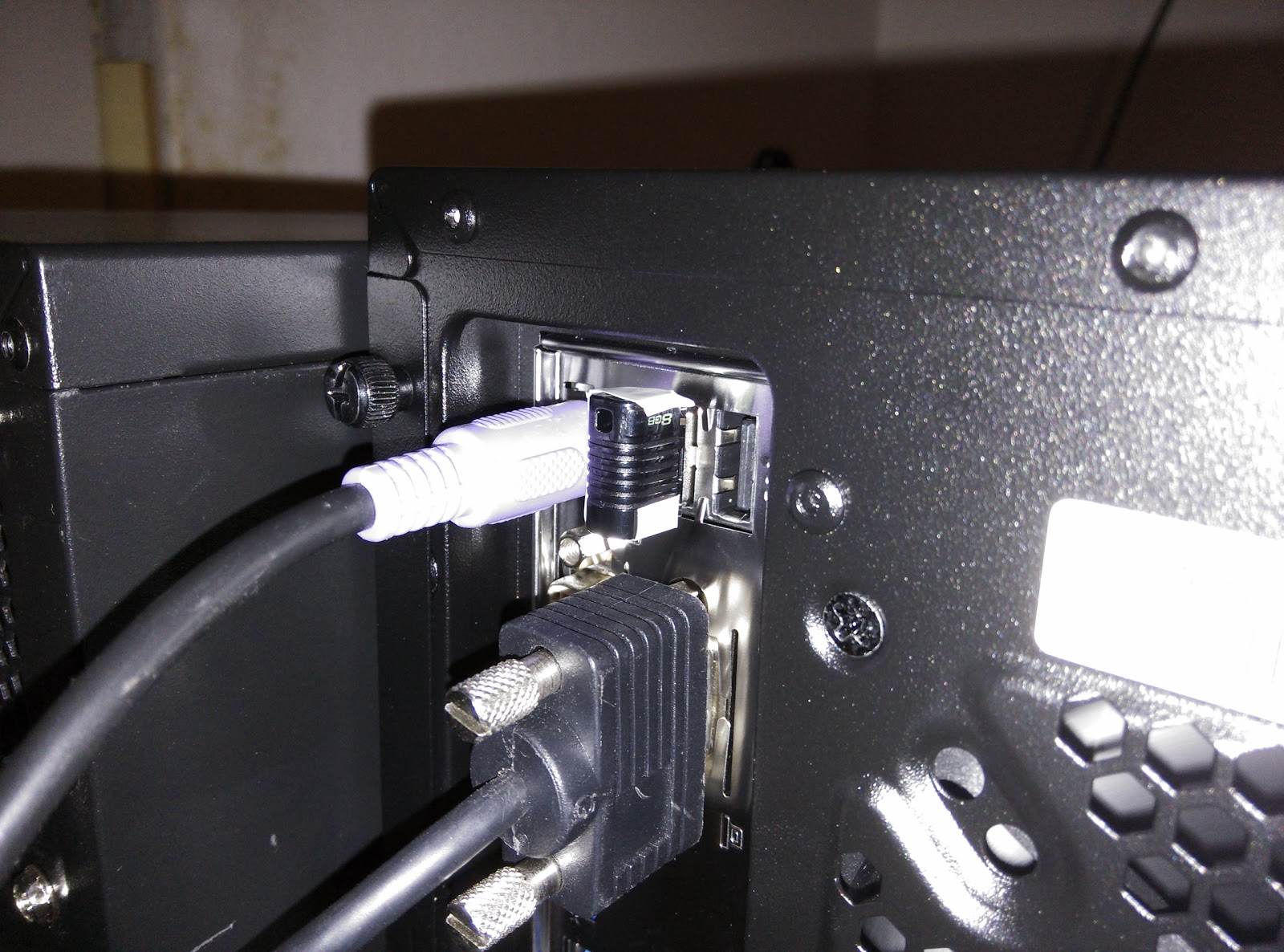

The motherboard is in, everything is hooked up and fairly cable managed

The back provides room to hide some of the cables. At first I was scared that the room behind the motherboard would be too shallow, but the case lids actually have a pre-formed bulge in them to compensate for this. Very well designed!

Everything is in place, ready to be turned on

The USB stick I use for my ESXi install, it’s a Kingston DataTraveler Micro 8GB Black

An important thing to note: the stick does not stick out further than the case, so it can be left in place when moving the machine to a LAN party or whatever

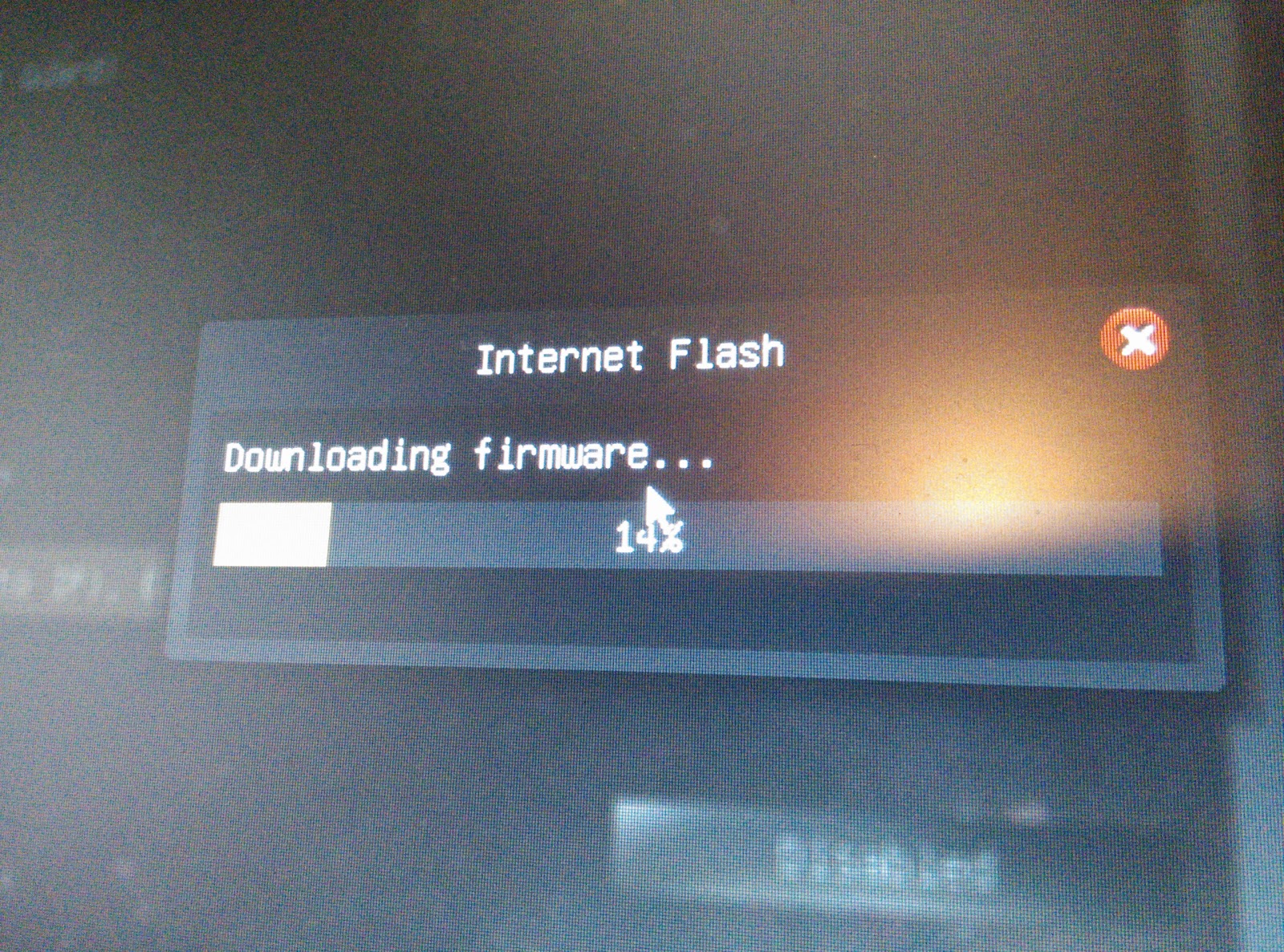

BIOS:

ASRock has an ‘in BIOS’ automatic flash tool which downloads the newest firmware for you from within the BIOS, most awesome feature ever! Be sure to update before changing your settings!

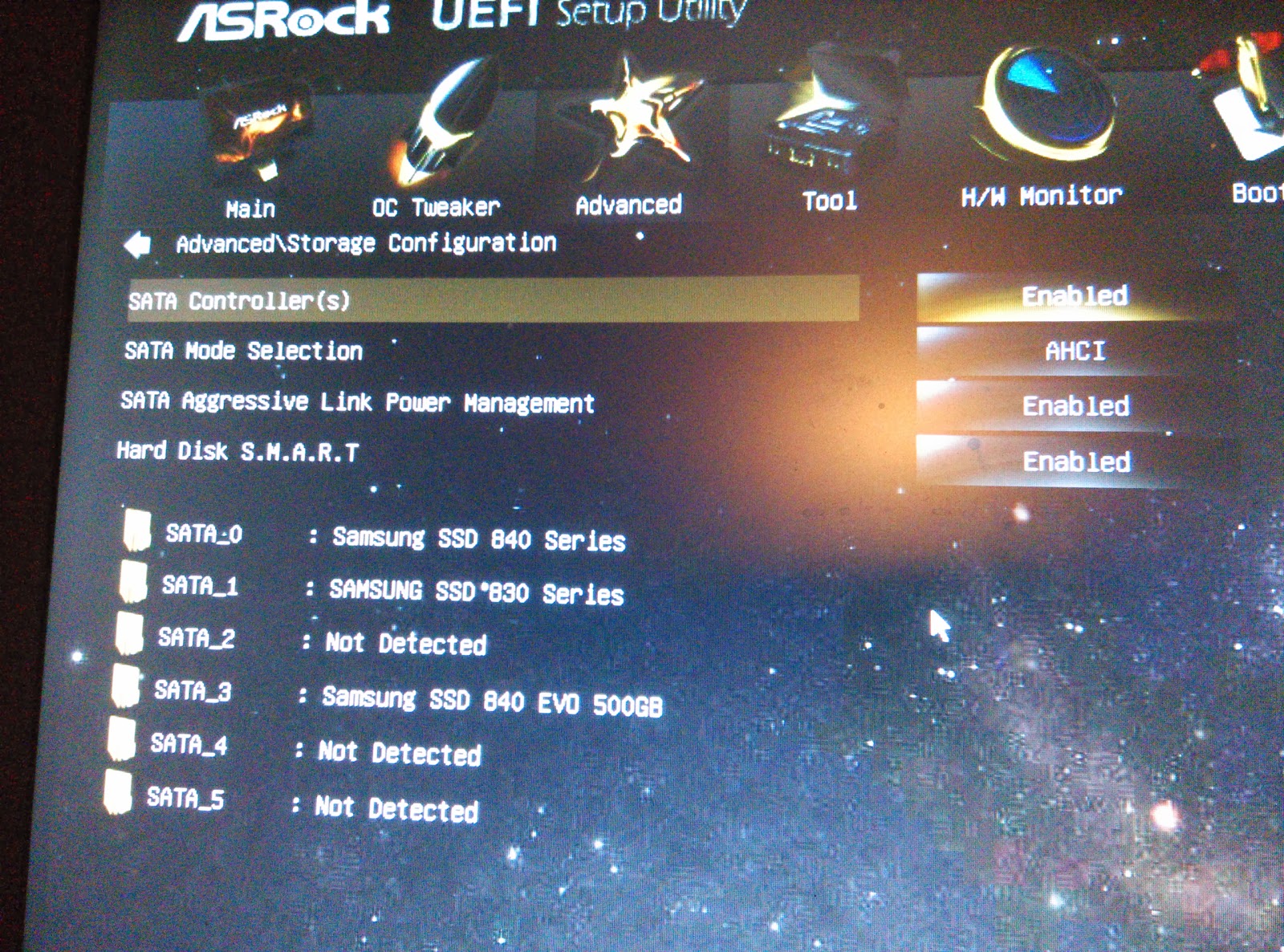

All SSD’s detected

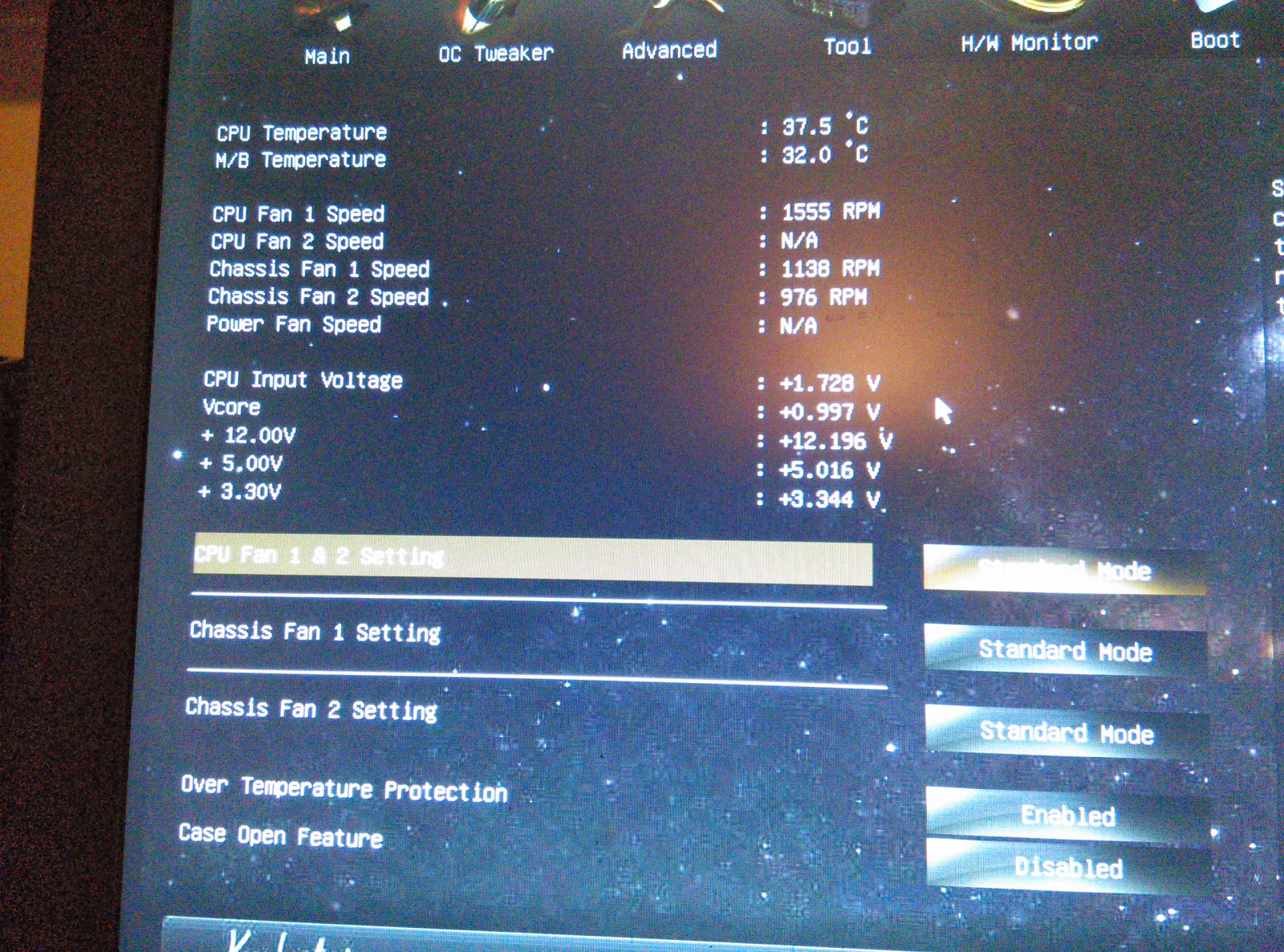

Enable FAN control (and check that they are working). Idle in bios for 5 minutes at this screen to make sure the cooling is working correctly

Make sure all other settings are set as you wish them but at least:

– Set Storage to AHCI

– Enable all virtualization options in the CPU menu

– Disable any automatic power down features (‘Green’ Power control is ok)

Installing ESXi:

I will not detail the installation with lots of screenshots. More than enough (good) guides can be found on how to do this. I will list the basic steps you need to go through.

1. Prepare 2 USB sticks of at least 4GB

2. Download the VMware ESXi 5.5 ISO from the VMware site (register if you wish to run the free version) and also download a tool called unetbootin

3. Insert the first USB stick and open unetbootin

4. In unetbootin, select your USB stick as the target device (if it fails, try formatting your USB stick as FAT32) and select that you wish to use an ISO. Select the VMware ESXi 5.5 ISO and let it do its magic. Depending on your USB stick this will take anywhere from 15 seconds to 2 minutes

5. Go to your newly constructed VMware machine and try to boot off the stick. If this works, power down the machine and insert the second USB stick next to the first one. The second stick is where we will be installing VMware ESXi 5.5

6. Boot from the first stick again and install VMware ESXi to the second stick. If all your networking stuff is in order (it recognizes at least one NIC) it should install without too many issues. Prior versions of the VMware installer would wipe any storage they found, but the current version doesn’t do this anymore

If all is well and ESXi boots, configure its IP and everything else on the console. Also enable SSH and remote shell login from the ‘troubleshoot’ menu.

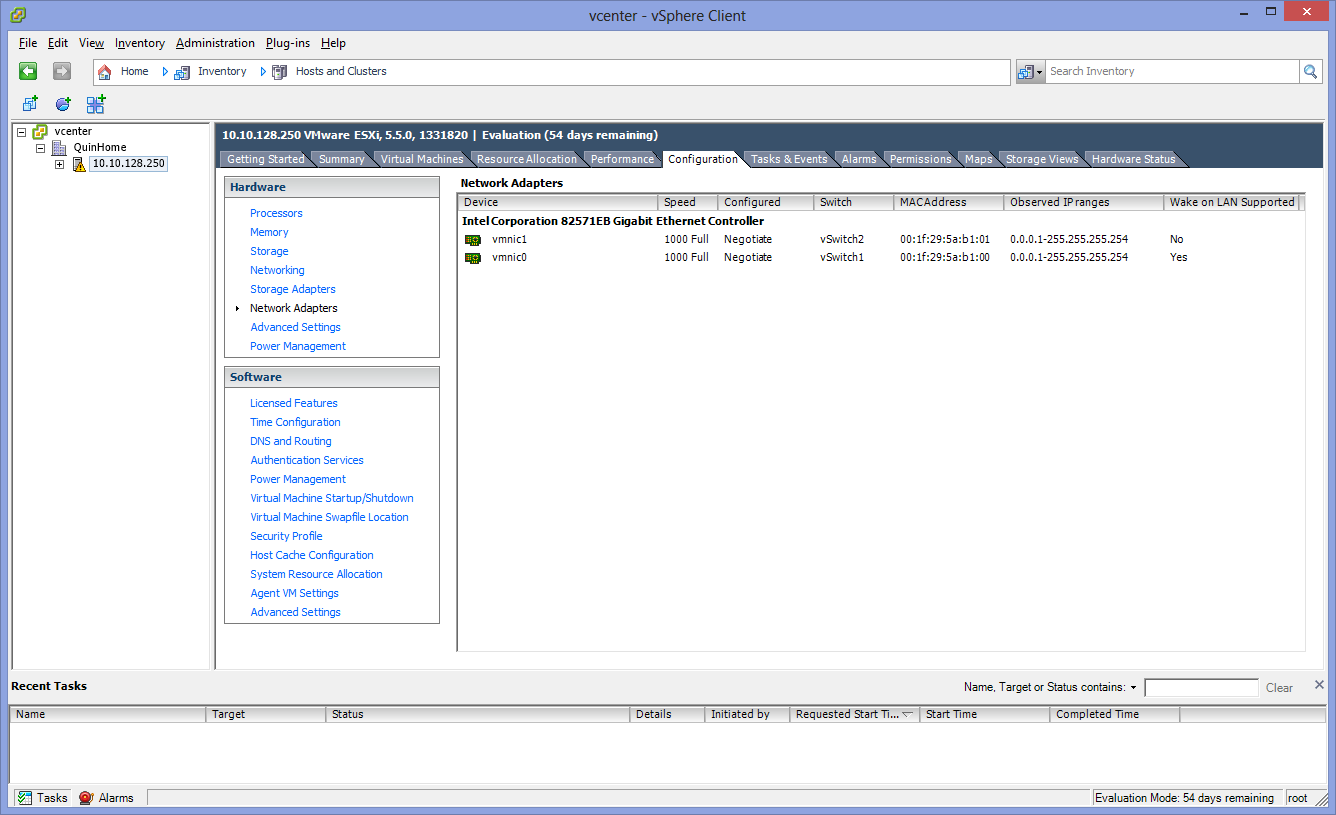

Connect with your ESXi to see if everything installed correctly. You should see something like the following:

All is well, don’t be alarmed by the “To Be Filled By O.E.M.” text. ESXi just reads a field in your BIOS that ASRock didn’t fill in. It does however list the correct BIOS version, which comes in handy!

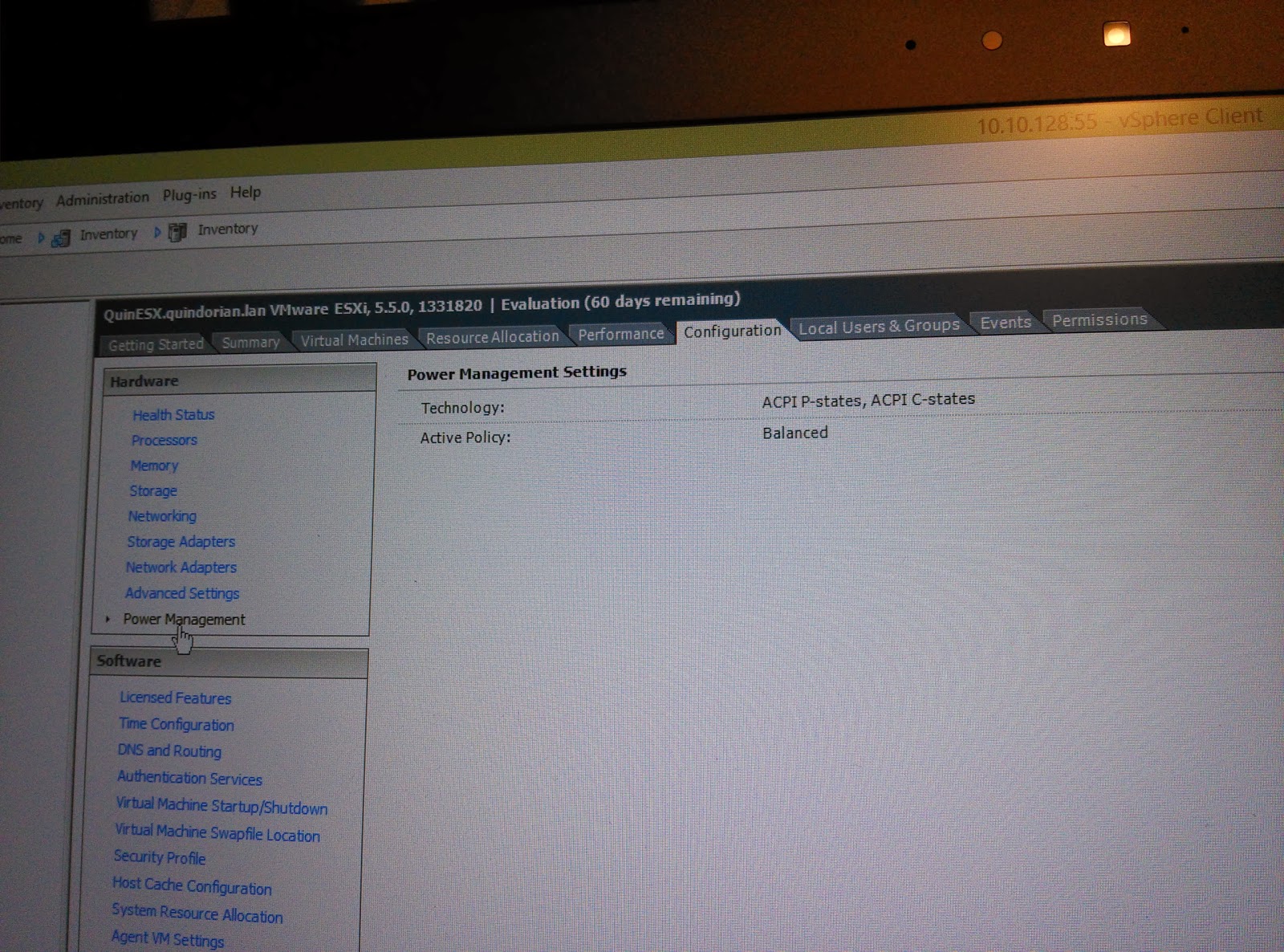

Power Management is recognized and activated automatically, excellent!

The two Pro/1000PT ports show up, but the onboard I217V does not

To fix this we need to do the following:

1. Create a DataStore

2. Use the “Browse DataStore” option and create a folder called ‘vib’. Use the buttons in the same window to upload the extra VIB file which holds the drivers (net-e1000e-2.3.2.x86_64.vib)

Then either move to the console of the server or login through SSH which we enabled before. While on there use the following command:

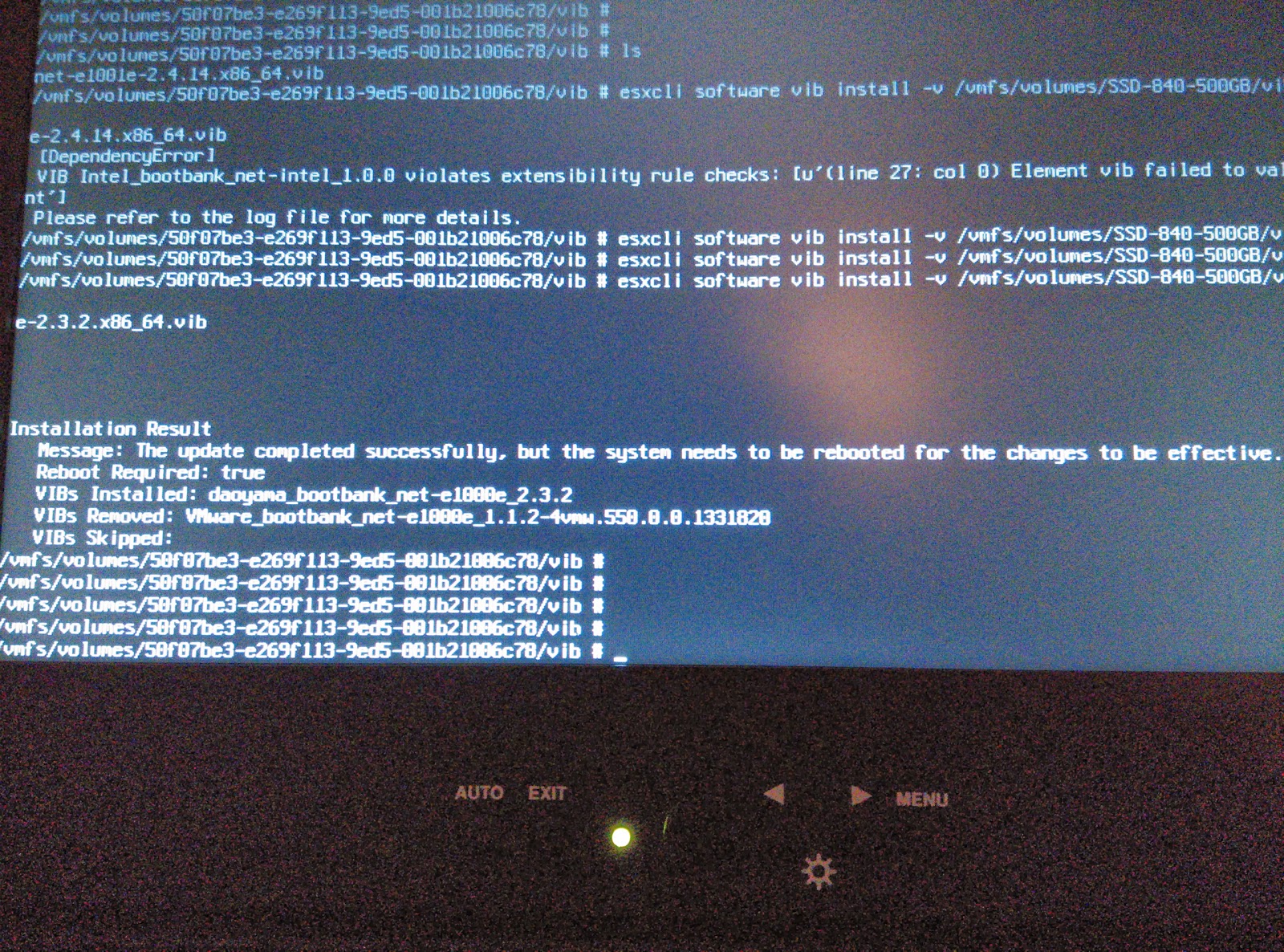

esxcli software vib install -v /vmfs/volumes/datastorename/vib/net-e1000e-2.3.2.x86_64.vib

If it works, it will ‘hang’ on that screen for a minute or two and then it will report back as seen on the following screenshot:

Success, the driver installed!

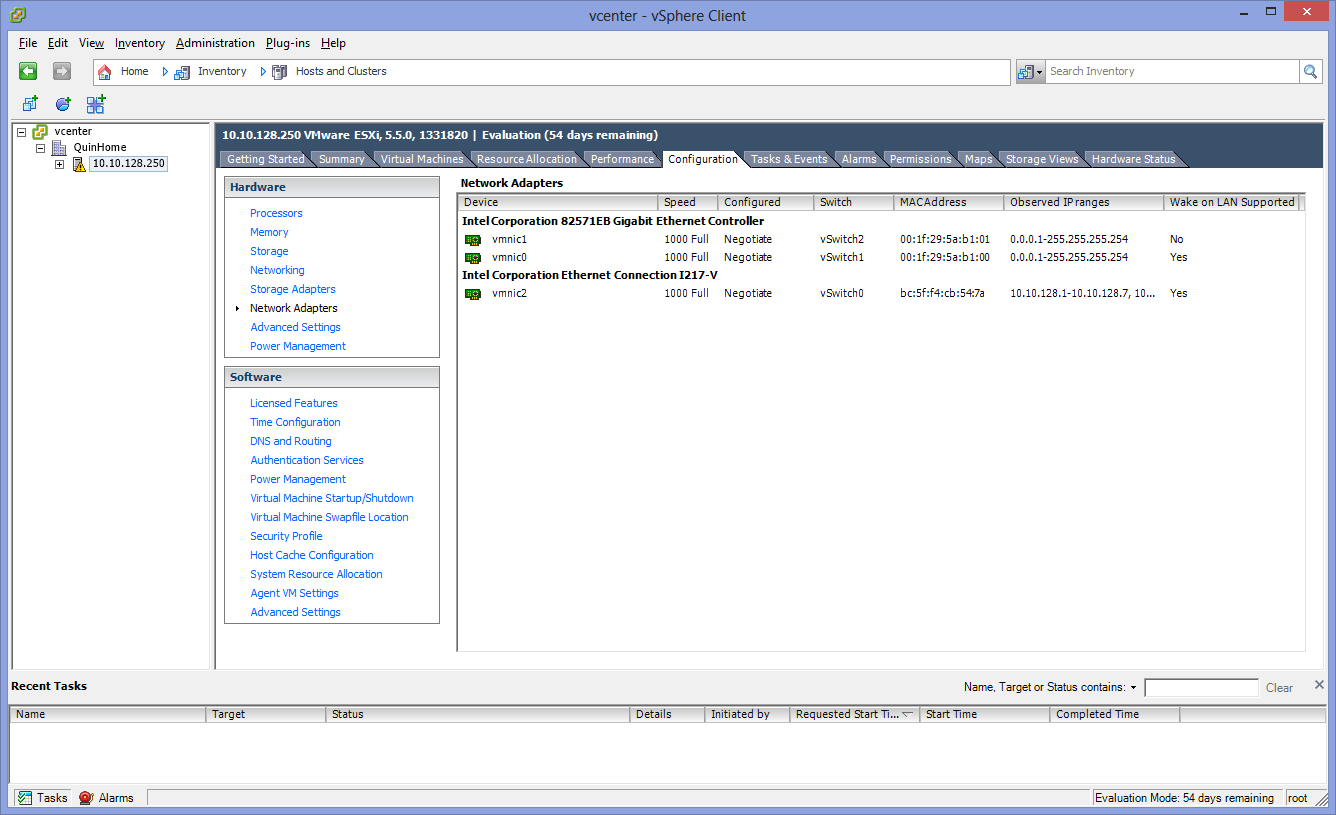

Now do a reboot of the ESXi server (ALT-F2, F12, reboot on the console)

When the server boots back up it should show the following:

Our network card shows up just fine

And that’s it, you now have a fully functioning ESXi server. The first step I would advise is installing the newest version of the VMware vCenter Appliance, since from ESXi 5.5 onwards all of the newly introduced features are only available through the vCenter Web Management Console. I can’t say I like it very much, but currently there is just no way around it. 🙁

As always, comments or questions welcome!

On to the next post: https://blog.quindorian.org/2013/11/home-serverstoragelab-setup-part-3-x.html

Hi, I only have the onboard I217-V NIC on my ASRock MB. The NAS4Free VM performance is bad: I get only 90Mb/sec (11MB/sec) data transfer. Have you tried assigning this NIC to one of your VMs to see the performance? Please let me know.

Thanks a lot for the post and the nice system.

Jay

Hi Jay, Thank you for your kind comment!

About your question: I have had no problems achieving full Gigabit speed in an ESXi guest (Windows or Linux) going through the I217-V NIC. Your number seems quite close to 100Mbit instead of 1000Mbit, maybe your switch is negotiating wrong?

Are you using VMXNET3 inside of your VMs? That is the best NIC to use. I’m not saying the Intel E1000 will not do it, but VMXNET3 is the better choice every time.
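For anyone wanting to check the arithmetic behind that guess, here is a small sketch converting link speeds from megabits to megabytes per second. It ignores protocol overhead, so real transfers will land somewhat below these numbers.

```python
def mbit_to_mbyte(mbit_per_s: float) -> float:
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbit_per_s / 8


# The reported transfer rate next to the two common Ethernet link speeds
for label, rate in [("reported", 90), ("Fast Ethernet", 100), ("Gigabit", 1000)]:
    print(f"{label:>13}: {rate:>4} Mbit/s = {mbit_to_mbyte(rate):6.2f} MB/s")
```

The reported 90Mbit/s sits just under the 100Mbit ceiling and nowhere near the Gigabit one, which is why a link negotiating at 100Mbit is the first thing to rule out.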

Hi,

I had the same issue with my ASRock MB/I217-V NIC. I swapped the old cable for one certified for Gigabit, and the port now reports 1000M.